Coding with AI: Shortcut to Success or Road to Mediocrity?

The Genuine Improvement

Let's start with what's actually true: AI coding tools have made good engineers significantly more productive. Not marginally more productive — significantly. Tasks that used to take hours take minutes. Boilerplate that required careful attention can be generated and reviewed in seconds. The mental overhead of context-switching between languages or frameworks has dropped dramatically.

For small engineering teams at startups, this is genuinely transformative. A team of three can now do what used to require a team of eight. The economic implications of that are enormous.

The New Failure Mode

But here's what nobody talks about enough: AI coding tools are also making it possible for mediocre engineers to produce large volumes of mediocre code very quickly.

That sounds obvious, but the implications are subtle. In the pre-AI era, a weak engineer's output was capped by how fast they could write code by hand. Now the volume is effectively uncapped, and what limits the quality is their judgment: their ability to recognise when AI-generated code is subtly wrong, architecturally unsound, or solving the wrong problem.

Judgment is harder to assess in interviews than technical skill. And AI-generated code often looks correct until it breaks in production.
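
As a small, hypothetical illustration of code that looks correct until it is exercised the way production exercises it, consider the Python sketch below. It is not taken from any particular tool's output, and the function names `add_tag` and `add_tag_fixed` are invented for this example. The first version passes a one-off test, but its default list is created once and shared by every later call, so state leaks between unrelated callers.

```python
def add_tag(tag, tags=[]):
    # Looks fine and passes a single-call test, but the default list is
    # created once, when the function is defined, and reused by every
    # later call that omits `tags`.
    tags.append(tag)
    return tags


def add_tag_fixed(tag, tags=None):
    # Create a fresh list per call unless the caller supplies one.
    if tags is None:
        tags = []
    tags.append(tag)
    return tags
```

Calling `add_tag("urgent")` and then `add_tag("billing")` returns the same list object both times, and the second result contains both tags. The fixed version returns a fresh single-element list on each call. Spotting the difference in review is a matter of judgment, not typing speed.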

Where Judgment Matters Most

There are specific places in a codebase where the difference between good and mediocre engineering judgment is most consequential:

Architecture decisions — AI tools are good at generating code within a given architecture, but they can't tell you whether the architecture itself is right for your scale, your team, or your use case.

Security boundaries — AI-generated code often handles the happy path correctly while leaving security vulnerabilities in error paths, edge cases, and admin interfaces.

Data model design — Getting a data model wrong early is expensive. AI tools tend to generate data models that work for the demo but don't scale gracefully.

Evaluation for AI features — If you're building AI features, you need to know how to evaluate whether they're working. AI coding tools don't know how to set up evaluation infrastructure; that requires domain knowledge.
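
To make the evaluation point concrete, here is a minimal sketch of an evaluation harness in Python. Everything in it is an assumption made for illustration: the `Example` type, the `evaluate` function, the `keyword_sentiment` stand-in for a real model call, and the four labelled examples. The harness itself is easy to generate. The part that needs domain knowledge is choosing the examples and deciding what counts as a correct answer.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Example:
    text: str
    expected: str


def evaluate(
    predict: Callable[[str], str], examples: list[Example]
) -> tuple[float, list[tuple[str, str, str]]]:
    """Return accuracy and a list of (text, expected, got) for each failure."""
    if not examples:
        raise ValueError("evaluation set is empty")
    failures = []
    for ex in examples:
        got = predict(ex.text)
        if got != ex.expected:
            failures.append((ex.text, ex.expected, got))
    accuracy = (len(examples) - len(failures)) / len(examples)
    return accuracy, failures


def keyword_sentiment(text: str) -> str:
    # Hypothetical stand-in for the AI feature under test.
    return "positive" if "good" in text.lower() else "negative"


# Hypothetical labelled set. The last two cases are the kind a domain
# expert would add because they know where a naive approach breaks.
EXAMPLES = [
    Example("Good product", "positive"),
    Example("Terrible support", "negative"),
    Example("Not good at all", "negative"),
    Example("Works as described", "positive"),
]
```

With this deliberately naive stand-in, `evaluate(keyword_sentiment, EXAMPLES)` reports an accuracy of 0.5: the two demo-style cases pass, while the negated phrase and the positive review without the keyword both fail. A test set containing only the first two cases would have reported a perfect score.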

How to Use AI Tools Well

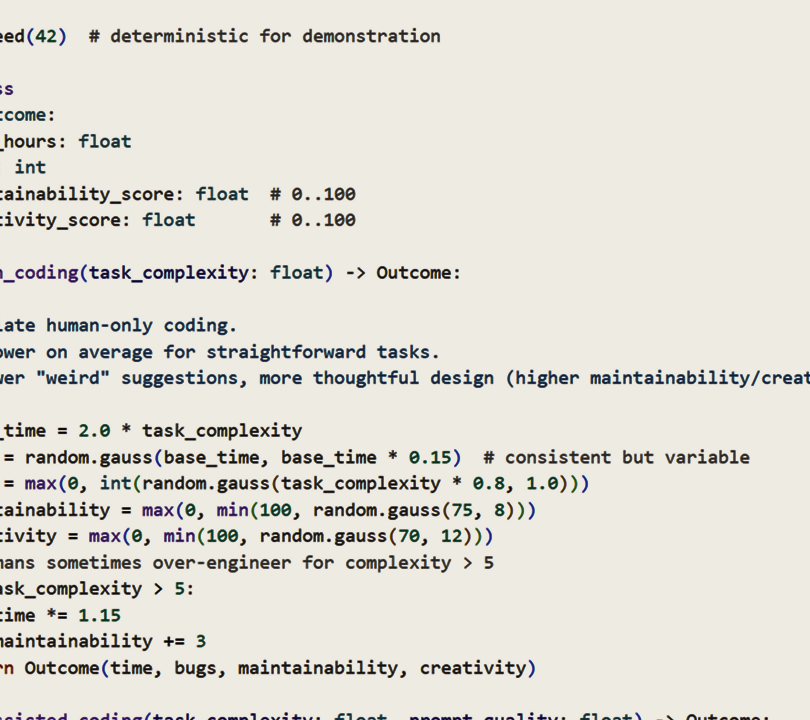

The best engineers we've worked with use AI tools as accelerators for the parts of development that don't require judgment, so they can spend their judgment on the parts that do. They use AI to generate tests, write documentation, scaffold boilerplate, and explore unfamiliar APIs — then they review and refine.

The worst use of AI tools is using them to avoid thinking. The tool will generate code. The code will often work in isolation. Then, once it is integrated with everything else, the system will fail in ways that are hard to diagnose and harder to fix.

The Mediocrity Trap

The shortcut to success is real. But so is the road to mediocrity. Which path you end up on depends almost entirely on the judgment of the engineers using the tools — not the tools themselves.

If you're hiring engineers who rely on AI tools to compensate for weak fundamentals, you're accumulating technical debt at a rate that wasn't previously possible. And you won't see the bill until it's very expensive to pay.